In this post, I'm not going to talk about ASP.NET Core for a change. Instead, I'm going to show one way to package CLI tools and their dependencies as Docker images. With a simple helper script, this allows you to run a CLI tool without having to install the dependencies on your host machine. I'll show how to create a Docker image containing your favourite CLI tool, and a helper script for invoking it.

All the commands in this post describe using Linux containers. The same principle can be applied to Windows containers if you update the commands. However, the benefits of isolating your environment come with the downside of large Docker image sizes.

If you're looking for a Dockerised version of the AWS CLI specifically, I have an image on Docker Hub which is generated from this GitHub repository.

The problem: dependency hell

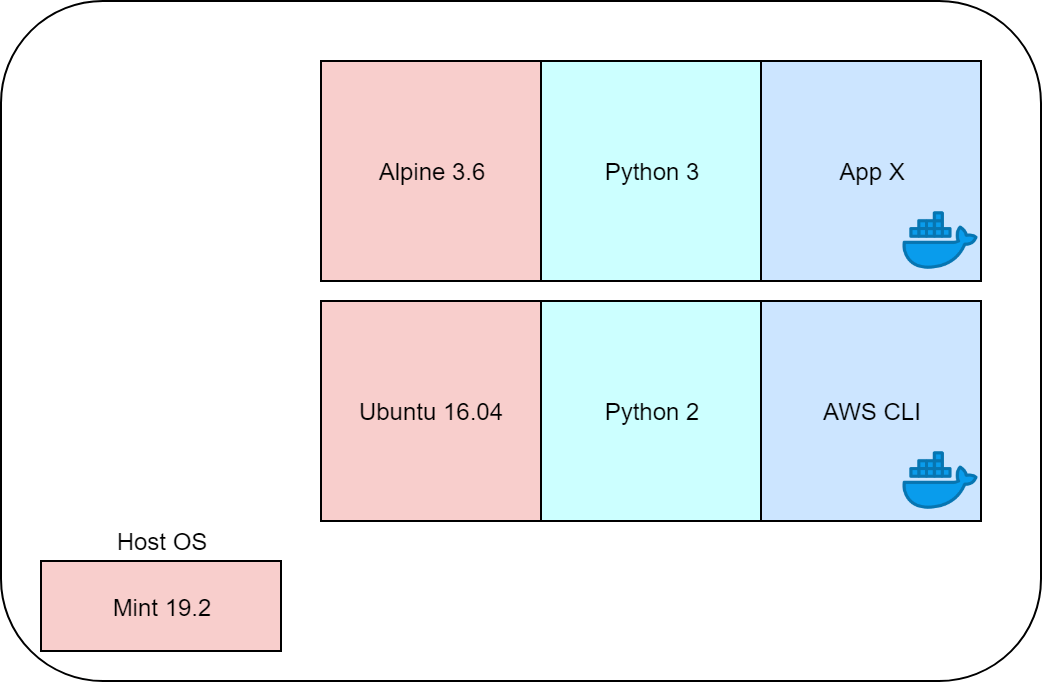

For example, take the AWS CLI. The suggested way to install the CLI on Linux is to use Python and pip (pip is the package installer for Python, the equivalent of NuGet for .NET). The recommended version to use is Python 3, but you may have other apps that require Python 2, at which point you're in a world of dependency hell.

Docker containers can completely remove this problem. By packaging all the dependencies of an application into a container (even the operating system), you isolate the app from both your host machine and other apps. Each container runs in its own little world, and can have completely different dependencies from every other container and from the host system.

This is obviously one of the big selling points of containers, and is part of the reason they're seeing such high adoption for production workloads. But they can also help with our AWS CLI problem. Instead of installing the CLI on our host machine, we can install it in a Docker container, and execute our CLI commands there.

Creating a Docker image for the AWS CLI

So what does it actually take to package up a tool in a Docker container? That depends on the tool in question. Hopefully, the installation instructions include a set of commands for you to run. In most cases, if you're at all familiar with Docker you can take these commands and convert them into a Dockerfile.

For example, let's take the AWS CLI instructions. According to the installation instructions, you need to have Python and pip installed, after which you can run

pip3 install awscli --upgrade --user

to install the CLI.

One of the main difficulties of packaging your app into a Docker container is establishing all of the dependencies. Python and pip are clearly required, but depending on which operating system you use for your base image, you may find you need to install additional dependencies.

Alpine Linux is a common candidate for a base OS as it's tiny, which keeps your final Docker images as small as possible. However, Alpine is kept small by not including much in the box. You may well find you need to add some extra dependencies for your target tool to work correctly.

The example Dockerfile below shows how to install the AWS CLI in an Alpine base image. It's taken from the aws-cli image which is available on Docker Hub:

FROM alpine:3.6

RUN apk -v --no-cache add \
        python \
        py-pip \
        groff \
        less \
        mailcap \
        && \
    pip install --upgrade awscli==1.16.206 s3cmd==2.0.2 python-magic && \
    apk -v --purge del py-pip

VOLUME /root/.aws
VOLUME /project
WORKDIR /project
ENTRYPOINT ["aws"]

This base image uses Alpine 3.6, and starts by installing a bunch of prerequisites:

- python: the Python (3) environment
- py-pip: the pip package installer we need to install the AWS CLI
- groff: used for formatting text
- less: used for controlling the amount of text displayed on a terminal
- mailcap: used for controlling how to display non-text

Next, as part of the same RUN command (to keep the final Docker image as small as possible) we install the AWS CLI using pip. We also install the tool s3cmd (which makes it easier to work with S3 data), and python-magic (which helps with mime-type detection).

As the last step of the RUN command, we uninstall the py-pip package. We only needed it to install the AWS CLI and other tools, and now it's just taking up space. Deleting (and purging) it helps keep the size of the final Docker image down.

The next two VOLUME commands define locations known by the Docker container when it runs on your machine. The /root/.aws path is where the AWS CLI will look for credential files. The /project path is where we set the working directory (using WORKDIR), so it's where the AWS CLI commands will be run. We'll bind that at runtime to wherever we want to run the AWS CLI, as you'll see shortly.

Finally we set the ENTRYPOINT for the container. This sets the command that will run when the container is executed. So running the Docker container will execute aws, the AWS CLI.

To build the image, run docker build in the same directory as the Dockerfile, and give it a tag:

docker build -t example/aws-cli .

You will now have a Docker image containing the AWS CLI. The next step is to use it!

Running your packaged tool image as a container

You can create a container from your tool image and run it in the most basic form using:

docker run --rm example/aws-cli

If you run this, Docker creates a container from your image, executes the aws command, and then exits. The --rm option means that the container is removed afterwards, so it doesn't clutter up your drive. In this example, we didn't provide any command line arguments, so the AWS CLI shows the standard help text:

> docker run --rm example/aws-cli
usage: aws [options] <command> <subcommand> [<subcommand> ...] [parameters]
To see help text, you can run:

  aws help
  aws <command> help
  aws <command> <subcommand> help
aws: error: too few arguments
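The help text appears because the container always starts aws, as set by ENTRYPOINT. The image also contains s3cmd, and one way to reach it is Docker's --entrypoint flag, which overrides the ENTRYPOINT for a single run. The following is only a minimal sketch: the function name s3cmd_docker and the DOCKER variable are hypothetical names introduced here, the latter purely so the command can be dry-run with echo.

```shell
# Sketch: run s3cmd from the same image by overriding the ENTRYPOINT.
# s3cmd_docker and DOCKER are hypothetical names, not part of the image.
s3cmd_docker() {
    # DOCKER defaults to the real docker binary; set DOCKER=echo for a dry run.
    ${DOCKER:-docker} run --rm --entrypoint s3cmd example/aws-cli "$@"
}

# Example (needs Docker and the image built earlier):
#   s3cmd_docker --version
```

The arguments after the image name are still forwarded, but to s3cmd instead of aws.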

If you want to do something useful, you'll need to provide some arguments to the CLI. For example, let's try listing the available S3 buckets, by passing the arguments s3 ls:

> docker run --rm example/aws-cli s3 ls
Unable to locate credentials. You can configure credentials by running "aws configure".

This is where things start to get a bit more tricky. To call AWS, you need to provide credentials. There are a variety of ways of doing this, including using credentials files in your profile, or by setting environment variables. The easiest approach is to use environment variables, by exporting them in your host environment:

export AWS_ACCESS_KEY_ID="<id>"
export AWS_SECRET_ACCESS_KEY="<key>"
export AWS_SESSION_TOKEN="<token>" # if using AWS SSO
export AWS_DEFAULT_REGION="<region>"

And passing these to the docker run command:

docker run --rm \
    -e AWS_ACCESS_KEY_ID \
    -e AWS_SECRET_ACCESS_KEY \
    -e AWS_DEFAULT_REGION \
    -e AWS_SESSION_TOKEN \
    example/aws-cli \
    s3 ls

I split the command over multiple lines as it's starting to get a bit unwieldy. If you have your AWS credentials stored in credentials files in $HOME/.aws instead of in environment variables, you can pass those to the container using:

docker run --rm \
    -v "$HOME/.aws:/root/.aws" \
    example/aws-cli \
    s3 ls
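Both approaches fail with the same "Unable to locate credentials" message if the variables are unset or the credentials file is missing, so a quick check before calling docker run can save some head-scratching. The sketch below is one possible way to do that, not something the image provides: both function names are hypothetical, and treating the region as required is an assumption based on the export list above.

```shell
# Sketch: pre-flight checks to run before "docker run".
# Both function names are hypothetical helpers introduced for this example.

# Print the names of the AWS variables that are unset or empty.
# AWS_SESSION_TOKEN is left out because it is only needed for temporary credentials.
missing_aws_vars() {
    missing=""
    for name in AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY AWS_DEFAULT_REGION; do
        # Look up the variable whose name is stored in $name.
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            missing="${missing:+$missing }$name"
        fi
    done
    printf '%s' "$missing"
}

# Succeed only if a credentials file exists in the given directory
# (defaults to $HOME/.aws, the directory mounted at /root/.aws).
has_aws_credentials_file() {
    dir="${1:-$HOME/.aws}"
    [ -f "$dir/credentials" ]
}
```

A wrapper could call both and print a friendlier message when neither source of credentials is available. The file check is worth having because, when the host path given to -v doesn't exist, Docker typically creates it as an empty directory rather than reporting an error.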

In these examples, we're just listing out our S3 buckets, so we're not interacting with the file system directly. But what if you want to copy a file from a bucket to your local file system? To achieve this, you need to bind your working directory to the /project volume inside the container. For example:

docker run --rm \
    -v "$HOME/.aws:/root/.aws" \
    -v "$PWD:/project" \
    example/aws-cli \
    s3 cp s3://mybucket/test.txt test2.txt

In this snippet we bind the current directory ($PWD) to the working directory in the container, /project. When we use s3 cp to download the test.txt file, it's written to /project/test2.txt in the container, which, because of the bind mount, is your current directory on the host.

By now you might be getting a bit fatigued - having to run such a long command every time you want to use the AWS CLI sucks. Luckily, there's an easy fix: a small script.

Using helper scripts to simplify running your containerised tool

Having to pass all those environment variables and volume mounts is a pain. The simplest solution is to create a basic script that includes all those defaults for you:

#!/bin/bash

docker run --rm \
    -v "$HOME/.aws:/root/.aws" \
    -v "$PWD:/project" \
    example/aws-cli \
    "$@"

Note that this script is pretty much the same as the final example from the previous section. The difference is that we're using the arguments catch-all "$@" at the end of the script, which means "paste all of the arguments here, each as a separately quoted string".
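To see why the quotes around "$@" matter, here's a small stand-alone demonstration that doesn't need Docker. It's only a sketch: the three function names are made up for the comparison, and the file name with a space is an invented example.

```shell
# Sketch: compare quoted "$@" with unquoted $* when forwarding arguments.
# All three function names are made up for this demonstration.
count_args() { echo "$#"; }
forward_quoted() { count_args "$@"; }
forward_unquoted() { count_args $*; }

forward_quoted s3 cp "my file.txt" s3://mybucket/     # prints 4
forward_unquoted s3 cp "my file.txt" s3://mybucket/   # prints 5
```

With the unquoted form, "my file.txt" is split into two arguments, so the CLI would see the wrong file names.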

If you save the helper script as aws.sh in your home directory (and give it execute permissions by running chmod +x ~/aws.sh), then copying a file becomes almost identical to using the AWS CLI directly:

# Using the aws cli directly
aws s3 cp s3://mybucket/test.txt test2.txt

# Using dockerised aws cli
~/aws.sh s3 cp s3://mybucket/test.txt test2.txt

Much nicer!

You could even go one step further and create an alias for aws to be the contents of the script:

alias aws='docker run --rm -v "$HOME/.aws:/root/.aws" -v "$PWD:/project" example/aws-cli'

or alternatively, copy the file into your path:

sudo cp ~/aws.sh /usr/local/bin/aws

As ever with Linux, there's a whole host of extra things you could do. You could create different versions of the aws.sh script that are configured to use alternative credentials or regions. And because you're using a Dockerised tool rather than installing the CLI directly on your host, you can also have scripts that use different versions of the CLI. All the while, you've avoided polluting your host environment with dependencies!
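As a rough illustration of the "different versions" idea, the wrapper could read the image name from an environment variable. This is a sketch under some assumptions: AWS_CLI_IMAGE and DOCKER are hypothetical variables I've introduced for the example, and the version tag in the usage comment is an image you would need to build and tag yourself.

```shell
# Sketch: one wrapper, several CLI versions.
# AWS_CLI_IMAGE and DOCKER are hypothetical variables introduced here.
aws_docker() {
    # Fall back to the image built in this post when AWS_CLI_IMAGE is unset.
    image="${AWS_CLI_IMAGE:-example/aws-cli}"
    # DOCKER=echo gives a dry run that prints the arguments instead of running them.
    ${DOCKER:-docker} run --rm \
        -v "$HOME/.aws:/root/.aws" \
        -v "$PWD:/project" \
        "$image" \
        "$@"
}

# Example (the :1.16.206 tag is an assumption; build and tag it yourself first):
#   AWS_CLI_IMAGE=example/aws-cli:1.16.206 aws_docker s3 ls
```

Setting DOCKER=echo is a handy way to check exactly what would be run before handing it to Docker.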

Summary

In this post, I showed how you can Dockerise your CLI tools to avoid having to install dependencies in your host environment. I showed how to pass environment variables and arguments to the Dockerised tool, and how to bind to your host's file system. Finally, I showed how you can use scripts to simplify executing your Docker images.

If you're looking for a Dockerised version of the AWS CLI specifically, I have an image on Docker Hub which is generated from this GitHub repository (which is a fork of an original which fell out of maintenance).