This post is a short follow-up to my previous two posts on running async tasks in ASP.NET Core. In this post I relay some feedback I've had, both on the examples I used to demonstrate the problem, and the approach I took. The previous posts can be found here and here.

Startup task examples

The most common feedback I've had about the posts concerns the examples I used to describe the problem. In my first post, I suggested three possible scenarios where you might want to run a task before your app starts up:

- Checking your strongly-typed configuration is valid.

- Priming/populating a cache with data from a database or API.

- Running database migrations before starting the app.

The first two options went down OK, but several people have raised concerns about the example of running database migrations. In both posts I pointed out that running database migrations as a startup task for your app may not be a good idea, but then I used it as an example anyway. In hindsight, that was a poor choice on my part…

Database migrations were a poor choice

So what is it about database migrations that makes them problematic? After all, you definitely need to have your database migrated before your app starts handling requests! There seem to be three issues:

- Only a single process should run database migrations

- Migrations often require more permissions than a typical web app should have

- People aren't comfortable running EF Core migrations directly

I'll discuss each of those in turn below.

1. Only a single process should run database migrations

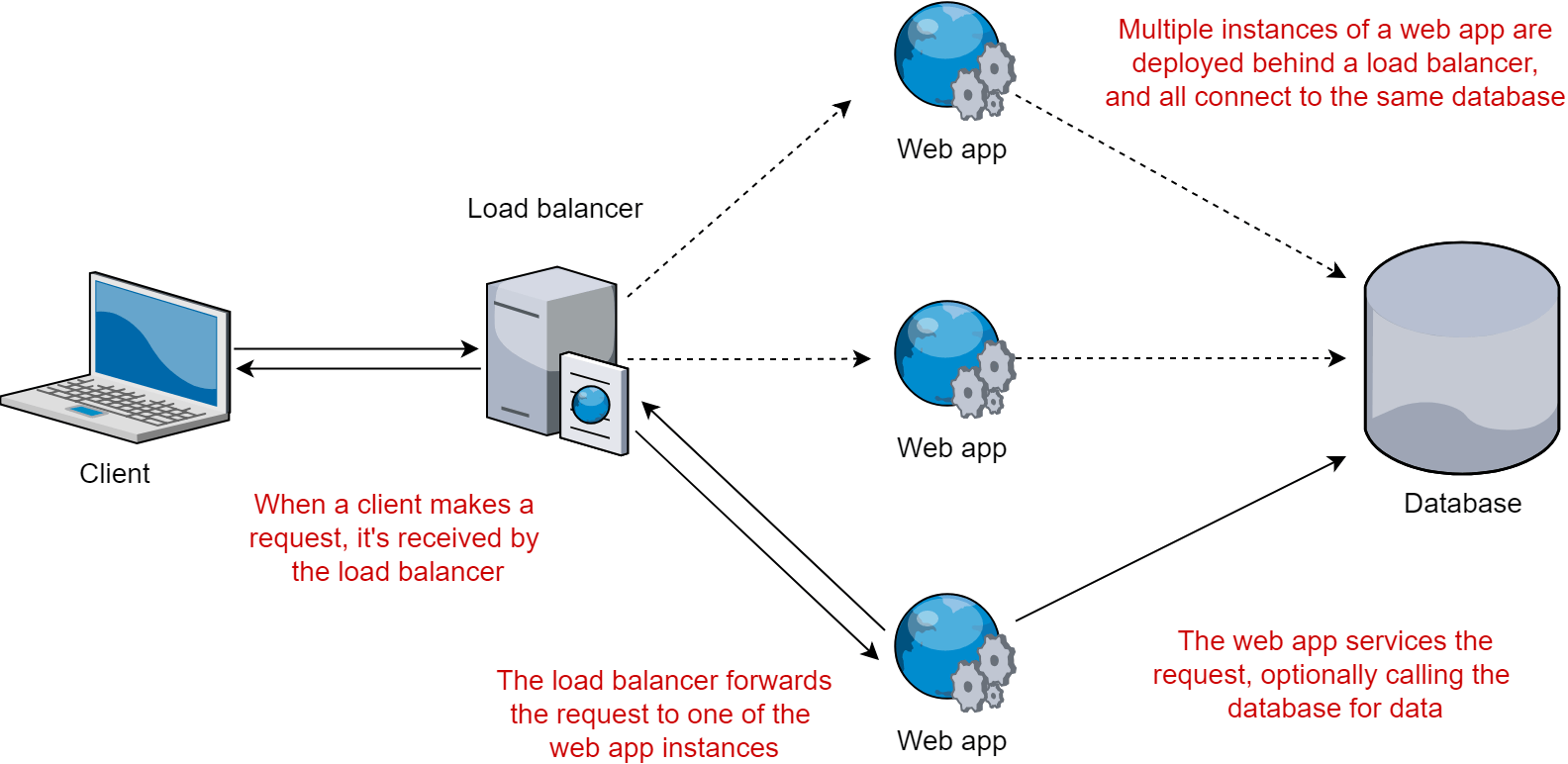

One of the most common ways to scale a web application is to scale it out by running multiple instances, and using a load balancer to distribute requests among them.

This "web farm" approach works well, especially if the application is stateless - requests are distributed among the various apps, and if one app crashes for some reason, the other apps are still available to handle requests.

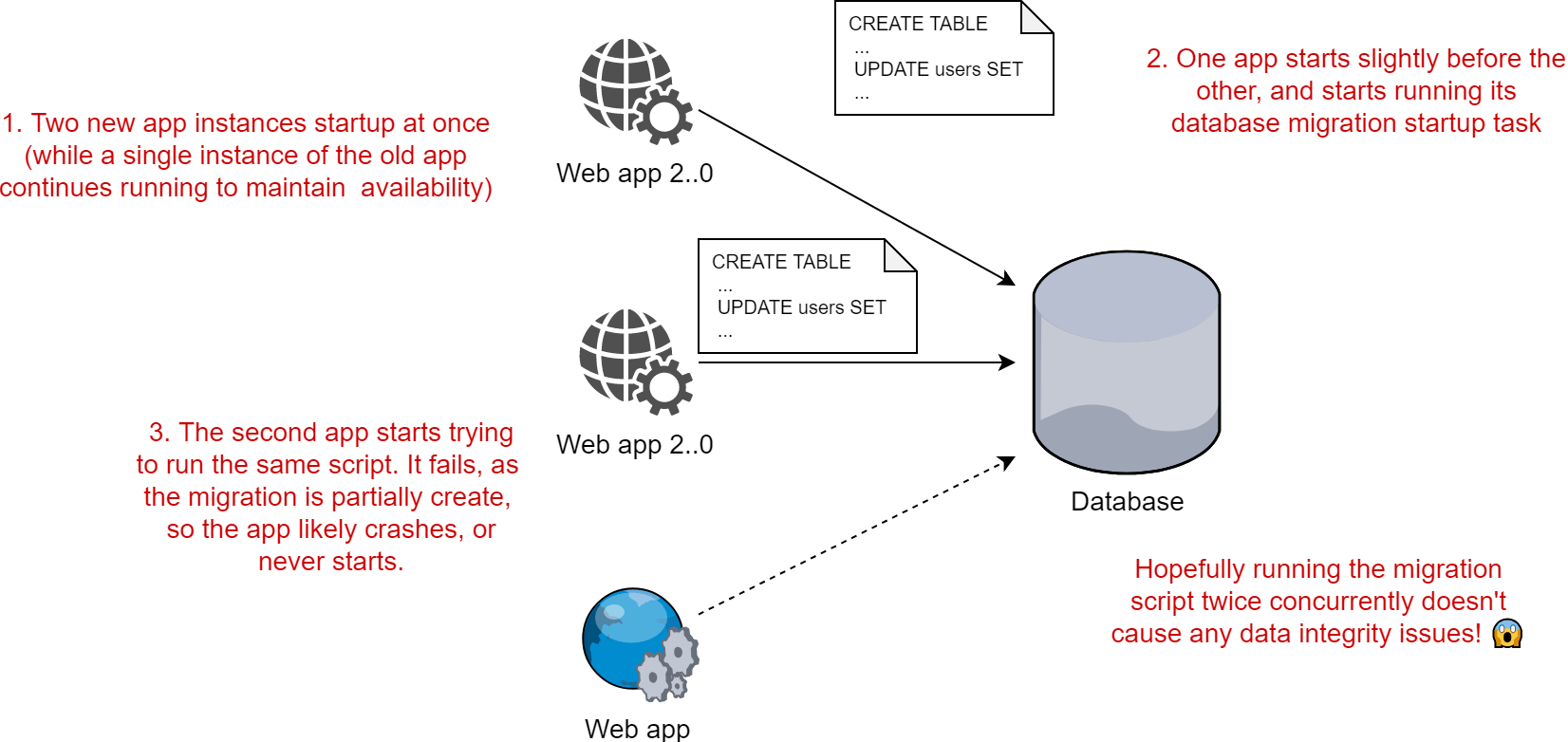

Unfortunately, if you try to run database migrations as a startup task in each instance of your application, you will likely run into issues. If more than one instance of your app starts at approximately the same time, multiple database migration tasks can start at once. It's not guaranteed that this will cause you problems, but unless you're extremely careful about ensuring idempotent updates and error-handling, you're likely to get into a pickle.

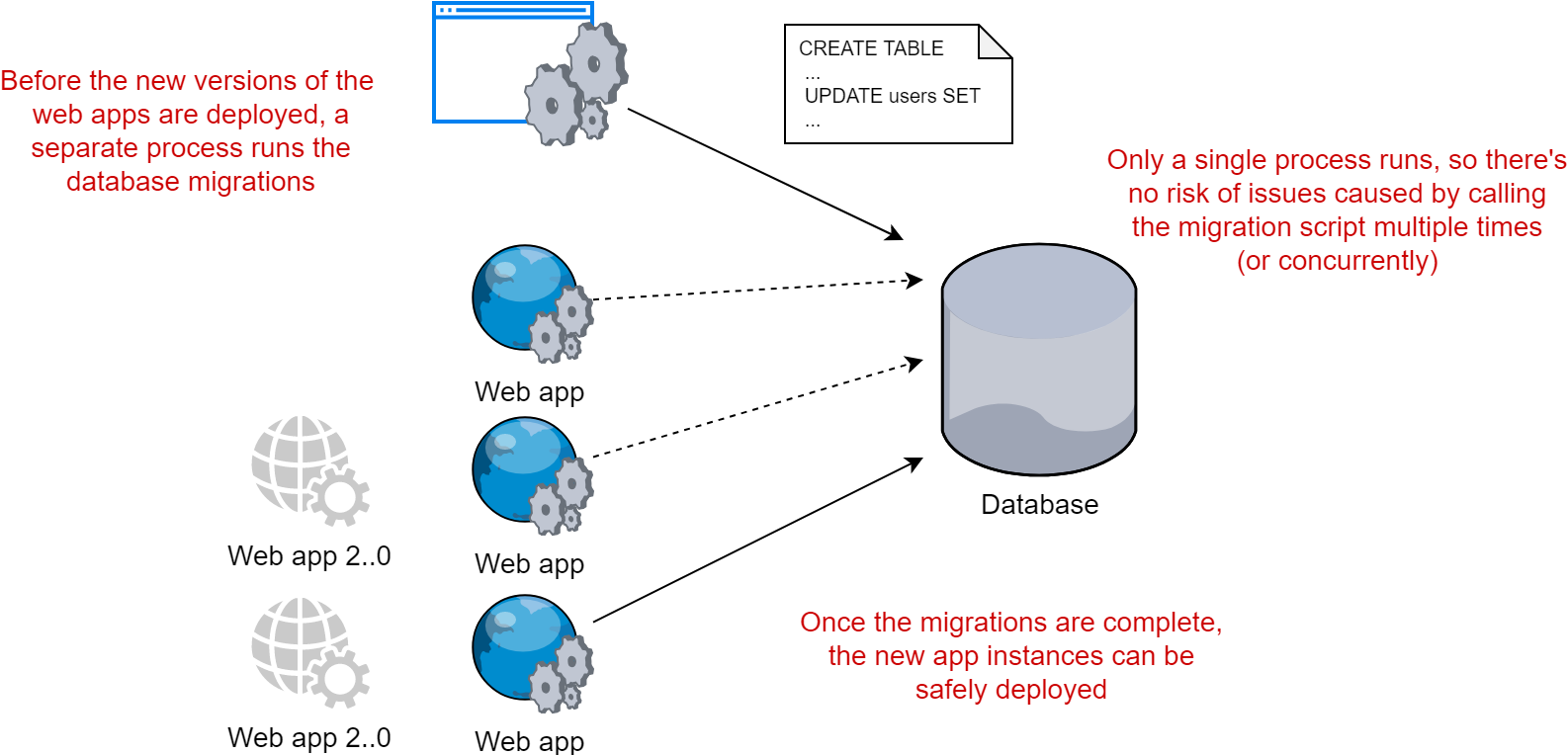

You really don't want to be dealing with the sort of database integrity issues that could arise by using this sort of approach. A much better option is to have a single migration process that runs before the web apps are deployed (or after, depending on your requirements). With a single process performing the database migrations you should be naturally shielded from the worst of the dangers.

This approach feels like more work than running database migrations as a startup task, but it's much safer and easier to get right.

It's not the only option of course. If you have your heart set on the startup task migration idea, then you could use some sort of distributed locking to ensure only a single app instance runs its migrations at one time. That doesn't solve the second issue however…
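To make the distributed locking idea a little more concrete, here's a minimal sketch. Everything in it is hypothetical: `IDistributedLock`, `InMemoryLock`, and `LockedMigrationTask` are names I've made up for illustration, and the in-memory lock only coordinates callers inside a single process. In a real web farm you'd need an implementation backed by something every instance shares (the database itself, for example).

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical abstraction. A real implementation would be backed by a
// resource shared between all app instances (a database lock, for example).
public interface IDistributedLock
{
    Task<IDisposable> AcquireAsync(string name, CancellationToken cancellationToken);
}

// In-process stand-in, used only to make the sketch runnable.
// It ignores the lock name and does NOT coordinate separate processes.
public sealed class InMemoryLock : IDistributedLock
{
    private readonly SemaphoreSlim _semaphore = new SemaphoreSlim(1, 1);

    public async Task<IDisposable> AcquireAsync(string name, CancellationToken cancellationToken)
    {
        await _semaphore.WaitAsync(cancellationToken);
        return new Releaser(_semaphore);
    }

    private sealed class Releaser : IDisposable
    {
        private SemaphoreSlim _semaphore;

        public Releaser(SemaphoreSlim semaphore) => _semaphore = semaphore;

        // Releases the semaphore once, even if Dispose is called twice.
        public void Dispose() => Interlocked.Exchange(ref _semaphore, null)?.Release();
    }
}

// Hypothetical startup task that wraps a migration delegate in the lock.
public sealed class LockedMigrationTask
{
    private readonly IDistributedLock _lock;
    private readonly Func<CancellationToken, Task> _migrate;

    public LockedMigrationTask(IDistributedLock distributedLock, Func<CancellationToken, Task> migrate)
    {
        _lock = distributedLock;
        _migrate = migrate;
    }

    public async Task ExecuteAsync(CancellationToken cancellationToken = default)
    {
        // Every caller waits here; only one at a time gets past this line.
        using (await _lock.AcquireAsync("database-migrations", cancellationToken))
        {
            // The delegate still runs once per caller, one after another,
            // so the migrations themselves must be safe to run repeatedly.
            await _migrate(cancellationToken);
        }
    }
}
```

Note that the lock only serialises the migrations. Each instance still attempts them in turn, so later instances need to find that there's nothing left to apply.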

2. Migrations often require more permissions than a typical web app should have

It's best practice to limit your applications so that they only have permission to access and modify the resources that they need to. If a reporting application only needs to read sales figures, then it shouldn't be able to modify them, or change the database schema! Locking down the permissions associated with a given connection string can prevent a whole raft of repercussions in the event that your app has a security issue.

If you're using your web app itself to run database migrations, then naturally that web app will require database permissions to do high-risk activities like modifying the database schema, changing permissions, or updating/deleting data. Do you really want your web application to have the ability to drop your students table (thanks XKCD)?

Again, there are some implementation-specific things you can do, like using different connection strings for the migration compared to the normal database access. But you fundamentally can't lock down the web app process like you could if you used an external migration process.

3. People aren't comfortable running EF Core migrations directly

This is a much less obvious point, but quite a few people have expressed that using the EF Core migration tools in production might not be a great idea.

I think your article should clarify that running EF migrations in production is not a good idea. Migration frameworks are a good idea to run on start up. 😊

— Khalid 👨🚀 (@buhakmeh) 9 January 2019

Personally, I haven't tried using EF Core migrations in production for over a year, and the tooling has undoubtedly improved since then. Having said that, I still see a few issues:

- Using the EF Core global tools for migrations requires installing the .NET Core SDK, which you shouldn't need on your production servers. You don't have to use this of course (I didn't in my posts for example).

- Running additional SQL scripts as well as the ones generated by EF Core was tricky last time I tried; EF Core would get upset. That could well be improved now though, or I may have been doing something wrong before!

- If you want to safely update databases, you'll probably have to do some editing of the generated scripts anyway. The database schema after a migration should be compatible with existing (running) applications to avoid downtime.

- The Microsoft docs themselves hint that it's not a good idea to run EF Core migrations on app startup!

Rather than use EF Core migrations, I've personally used DbUp and FluentMigrator, and found them both to work well.

So if a database migration task isn't a good candidate for an app startup task example, what is?

Better examples of startup tasks

I mentioned a couple of other examples in my posts, but I describe them again below. There are also a couple of interesting additions (courtesy of Ruben Bartelink):

- Checking your strongly-typed configuration is valid. ASP.NET Core 2.2 introduced Options validation, but it only does this on first accessing the IOptions<T> classes. As I described in a previous post, you will probably want to perform eager validation on app startup to confirm your environment and configuration are valid.

- Populating a cache. Your application might require data from the filesystem or a remote service that it only needs to load once, but that is relatively expensive to load. Loading this data before the app starts prevents a request being burdened with that latency.

- Pre-connecting to databases and/or external services. In a similar manner, you could populate the database connection pool by connecting to your database or other external services. These are generally relatively expensive operations, so are great use cases.

- Pre-JITing and loading assemblies by instantiating all the singletons in your application. I think this is a very interesting idea - by loading all the Singletons registered with your DI container on app startup, you can potentially avoid a lot of expensive JIT compiling and assembly loading from happening in the context of a request.

As with all of these examples, the benefits you'll see will be highly dependent on the characteristics of your particular app. That said, the final suggestion, pre-instantiating all the singletons in your application, particularly piqued my interest, so I'll show how to achieve that in a later post.
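As an illustration of the first example in the list, eager configuration validation, here's a rough sketch of what such a startup task could look like. The `SlackSettings` class and `ValidateSettingsStartupTask<TSettings>` are hypothetical names, and I've assumed the settings are annotated with `DataAnnotations` attributes. In an ASP.NET Core app the settings instance would typically come from `IOptions<T>.Value`, which I've left out so that the sketch only depends on the base class library.

```csharp
using System;
using System.ComponentModel.DataAnnotations;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical settings class, used purely for illustration.
public class SlackSettings
{
    [Required, Url]
    public string WebhookUrl { get; set; }

    [Required]
    public string Channel { get; set; }
}

// Hypothetical startup task: validates a settings object once, up front,
// instead of waiting for the first request that happens to use it.
public class ValidateSettingsStartupTask<TSettings> where TSettings : class
{
    private readonly TSettings _settings;

    public ValidateSettingsStartupTask(TSettings settings)
    {
        _settings = settings ?? throw new ArgumentNullException(nameof(settings));
    }

    public Task ExecuteAsync(CancellationToken cancellationToken = default)
    {
        // Throws a ValidationException if any attribute check fails.
        // validateAllProperties: true checks every attribute, not just [Required].
        Validator.ValidateObject(
            _settings,
            new ValidationContext(_settings),
            validateAllProperties: true);

        return Task.CompletedTask;
    }
}
```

Letting the exception propagate out of the startup task means a misconfigured app fails immediately and loudly, rather than on whichever request first touches the bad setting.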

An alternative approach using health checks

I completely agree with the feedback on database migrations. It's somewhat misleading to use them as an example of a startup task when that's not a good idea, especially as I personally don't use the approach I described anyway!

Nevertheless, lots of people agreed with the overall approach I described: running startup tasks before starting the Kestrel server and handling requests.

One exception was Damian Hickey, who said that his preference is to start Kestrel as soon as possible. He suggested using health check endpoints to signal to a load balancer that the application is ready to start receiving requests once all the startup tasks have completed. In the meantime, all non-health-check traffic (there shouldn't be any if the load balancer is doing its job) would receive a 503 Service Unavailable.

The main benefit of this approach is that it avoids network timeouts. Generally speaking, it's better for a request to return quickly with an error code than for it to not respond at all, and cause the client to time out. This reduces the number of resources required by the client. By starting Kestrel earlier, the application can start responding to requests earlier, even if the response is a "not ready" response.
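The core of that idea can be sketched without any ASP.NET Core types at all. The names below (`StartupTaskTracker`, `ReadinessGate`, and the `/healthz` path) are hypothetical and only meant to show the decision being made for each request. I'm assuming every startup task is registered before the server starts listening, otherwise the app would briefly report itself as ready.

```csharp
using System;
using System.Threading;

// Hypothetical helper that counts the startup tasks still outstanding.
public sealed class StartupTaskTracker
{
    private int _outstanding;

    // Call once per startup task, before the server starts listening.
    public void RegisterTask() => Interlocked.Increment(ref _outstanding);

    // Call when a startup task has finished successfully.
    public void MarkTaskComplete() => Interlocked.Decrement(ref _outstanding);

    public bool IsReady => Volatile.Read(ref _outstanding) == 0;
}

public static class ReadinessGate
{
    // Returns the status code to respond with immediately, or null when the
    // request should continue through the rest of the pipeline as normal.
    public static int? GetShortCircuitStatus(
        StartupTaskTracker tracker, string requestPath, string healthPath = "/healthz")
    {
        if (!tracker.IsReady)
        {
            // 503 Service Unavailable for everything, health check included,
            // so the load balancer keeps this instance out of rotation.
            return 503;
        }

        if (string.Equals(requestPath, healthPath, StringComparison.OrdinalIgnoreCase))
        {
            // 200 OK tells the load balancer it can start sending traffic.
            return 200;
        }

        return null;
    }
}
```

In a real app this decision would sit in middleware or a health check registered early in the pipeline, with the tracker shared as a singleton between the startup tasks and the request-handling code.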

This is actually pretty similar to an approach described in my first post, but which I discarded as both too complex, and not achieving the goals I set out. Technically in that post I was specifically looking at ways to run tasks before Kestrel starts, which the health-check approach wouldn't do.

However, the fact that Damian specifically prefers to run Kestrel first has made me think again. I can't think of any practical examples of tasks or other reasons that would require tasks to be complete before Kestrel starts; I can only think of tasks that need to be run before regular traffic reaches the app, which is achieved with both approaches.

So, in my next post, I'll show an example of how to take this approach, and use the ASP.NET Core 2.2 health checks to signal to a load balancer when an application has completed all its startup tasks.

Summary

In this post I shared some feedback on my previous posts about running async tasks on app startup. The biggest issue was my choice to use EF Core database migrations as an example of a startup task. Database migrations aren't well suited to running on app startup as they typically need to be run by a single process, and require more database permissions than a normal web app would.

I provided some examples of better startup tasks, as well as a suggestion for a different approach, which starts Kestrel as soon as possible, and uses health checks to signal when the tasks are complete. I'll show an example of this in my next post.

Thanks to everyone who provided feedback on the posts, especially Ruben and Damian.