In my previous post I updated my comparison between ImageSharp and CoreCompat.System.Drawing. Instead of loading an arbitrary file from a URL using the HttpClient, the path to a file in the wwwroot folder was provided as part of the URL path.

This approach falls more in line with use cases you've likely run into many times - an image is uploaded at a particular size and you need to dynamically resize it to arbitrary dimensions. I've been using ImageSharp to do this - a new image library that runs on netstandard1.1 and is written entirely in managed code, so is fully cross-platform.

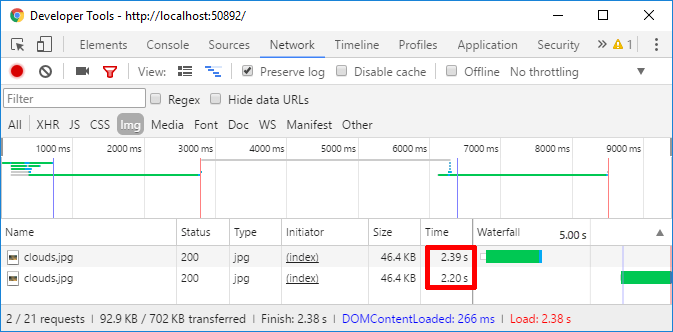

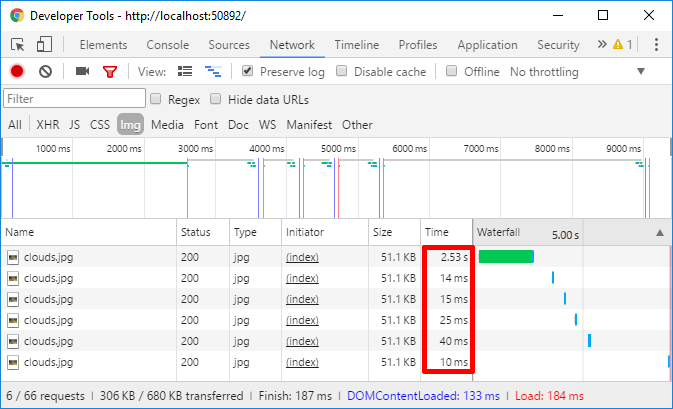

The code from the previous post fulfils this role, allowing you to arbitrarily resize images in your website. The biggest problem with the code as-is is how long it takes to process an image - large images could take multiple seconds to be loaded, processed, and served, as you can see below

This post shows how to add IDistributedCache to the implementation to quickly improve the response time for serving resized images.

IDistributedCache and MemoryDistributedCache

The most obvious option here is to cache the resized image after it's first processed, and just serve the resized image from cache for subsequent requests. ASP.NET Core includes a general caching infrastructure in the form of the IDistributedCache interface. It's used by various parts of the framework, whenever caching is needed.

The IDistributedCache interface provides a number of methods related to saving and loading byte arrays by a string key, the pertinent ones of which are shown below:

    public interface IDistributedCache
    {
        Task<byte[]> GetAsync(string key);
        Task SetAsync(string key, byte[] value, DistributedCacheEntryOptions options);
    }

In addition, there is an extension method that provides a simplified version of the SetAsync method, without DistributedCacheEntryOptions:

    public static Task SetAsync(this IDistributedCache cache, string key, byte[] value)
    {
        return cache.SetAsync(key, value, new DistributedCacheEntryOptions());
    }
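When you do want control over how long an entry lives, you can pass your own DistributedCacheEntryOptions to the three-argument overload instead. The following is only a sketch: the ImageCacheOptions helper is a hypothetical name of mine, and the one-hour sliding window is an arbitrary illustrative value rather than a recommendation.

```csharp
using System;
using Microsoft.Extensions.Caching.Distributed;

public static class ImageCacheOptions
{
    // Hypothetical helper: builds the options used for every cached image.
    public static DistributedCacheEntryOptions Create()
    {
        return new DistributedCacheEntryOptions
        {
            // Assumption: an entry that has not been read for an hour can be dropped.
            SlidingExpiration = TimeSpan.FromHours(1)
        };
    }
}
```

You would then call `cache.SetAsync(key, data, ImageCacheOptions.Create())` rather than the two-argument extension method.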

There are a number of different implementations of IDistributedCache that you can use to store data in Redis, SQL Server and other stores, but by default, ASP.NET Core registers an in-memory cache, MemoryDistributedCache, which uses the IMemoryCache under the hood. This essentially caches data in a dictionary in-process. Normally, you would want to replace this with a genuinely distributed cache, but for our purposes, it should do the job nicely.

Loading data from an IDistributedCache

The first step to adding caching is to decide on a key to use. For our case, that's quite simple - we can combine the requested path with the requested image dimensions. We can then try to load the image from the cache. If we get a hit, we can use the cached byte[] data to create a FileResult directly, instead of having to load and resize the image again:

    public class HomeController : Controller
    {
        private readonly IFileProvider _fileProvider;
        private readonly IDistributedCache _cache;

        public HomeController(IHostingEnvironment env, IDistributedCache cache)
        {
            _fileProvider = env.WebRootFileProvider;
            _cache = cache;
        }

        [Route("/image/{width}/{height}/{*url}")]
        public async Task<IActionResult> ResizeImage(string url, int width, int height)
        {
            if (width < 0 || height < 0) { return BadRequest(); }

            // The key combines the requested dimensions with the requested path
            var key = $"/{width}/{height}/{url}";
            var data = await _cache.GetAsync(key);
            if (data == null)
            {
                // resize image and cache it
            }

            // "image/jpeg" is the registered MIME type for JPEG images
            return File(data, "image/jpeg");
        }
    }
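One thing to be aware of with a key built this way is that two URLs differing only in case or in stray slashes produce two separate cache entries for the same file. If that matters to you, you could normalise the path before building the key. This is a sketch only: ImageCacheKey is a hypothetical helper that is not part of the controller above, and lower-casing is only safe on the assumption that your file system is case-insensitive.

```csharp
public static class ImageCacheKey
{
    // Hypothetical helper: builds a cache key from the requested path and size.
    public static string Create(string url, int width, int height)
    {
        // Assumption: "Images/Clouds.JPG" and "images/clouds.jpg" are the same
        // file. On a case-sensitive file system, skip the ToLowerInvariant call.
        var normalizedPath = (url ?? string.Empty).Trim('/').ToLowerInvariant();

        return $"/{width}/{height}/{normalizedPath}";
    }
}
```

In the action you would replace the interpolated string with `ImageCacheKey.Create(url, width, height)`.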

All that remains is to add the set-cache code. This code is very similar to that in the previous post; the only difference is that we need to create a byte[] of data to cache, instead of passing a Stream to the FileResult.

Saving resized images in an IDistributedCache

Saving data to the IDistributedCache is very simple - you simply provide the string key and the data as byte[]. We'll reuse most of the code from the previous post here - checking the requested image exists, reading it into memory, resizing it and saving it to an output stream. The only difference is that we call ToArray() on the MemoryStream to get a byte[] we can store in cache.

    var imagePath = PathString.FromUriComponent("/" + url);
    var fileInfo = _fileProvider.GetFileInfo(imagePath);
    if (!fileInfo.Exists) { return NotFound(); }

    using (var outputStream = new MemoryStream())
    {
        using (var inputStream = fileInfo.CreateReadStream())
        using (var image = Image.Load(inputStream))
        {
            image
                .Resize(width, height)
                .SaveAsJpeg(outputStream);
        }

        data = outputStream.ToArray();
    }

    await _cache.SetAsync(key, data);

And that's it, we're done - let's take it for a spin.

Testing it out

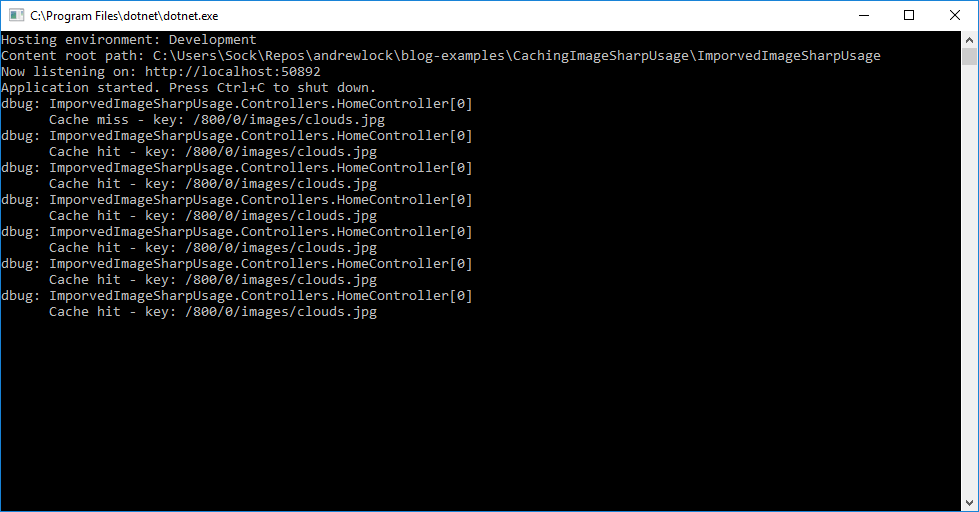

The first time we request an image, the cache is empty, so we still have to check the image exists, load it up, resize it, and store it in the cache. This is the same process as we had before, so the first request for a resized image is always going to be slow:

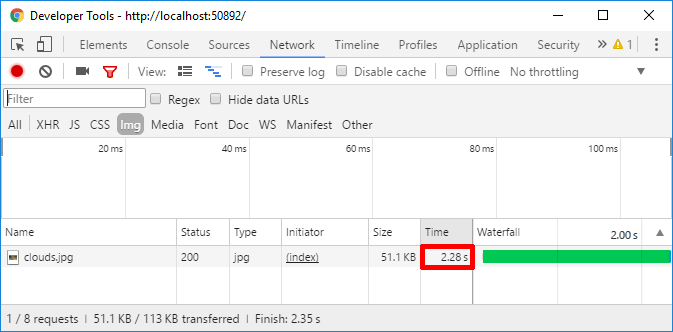

If we reload the page however, you can see that our subsequent requests are much better - we're down from 2+ seconds to 10ms in some cases!

This is clearly a vast improvement, and suddenly makes the approach of resizing on-the-fly a viable option. If we want, we can add some logging to our method to confirm that we are in fact pulling the data from the cache:
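As a rough sketch of what that logging could look like, the get-then-set logic can be pulled into a small helper that logs whether each request was a hit or a miss. CachedImageLoader and its resize delegate are hypothetical names of mine, not code from the controller above, and the helper assumes the caller has already checked that the file exists, so the delegate never returns null.

```csharp
using System;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Distributed;
using Microsoft.Extensions.Logging;

public static class CachedImageLoader
{
    // Hypothetical helper: returns the cached bytes when present, otherwise
    // runs the supplied resize delegate, caches its result and returns it.
    public static async Task<byte[]> GetOrCreateAsync(
        IDistributedCache cache,
        ILogger logger,
        string key,
        Func<Task<byte[]>> resize)
    {
        var data = await cache.GetAsync(key);
        if (data != null)
        {
            logger.LogInformation("Cache hit for {Key}", key);
            return data;
        }

        logger.LogInformation("Cache miss for {Key}, resizing image", key);
        data = await resize();
        await cache.SetAsync(key, data);
        return data;
    }
}
```

With an ILogger<HomeController> injected into the controller, the first request for a given size should log a miss and every later request a hit.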

Protecting against DOS attacks

While we have a working, cached version of our resizing action, there is still one particular aspect we haven't covered that was raised by Bertrand Le Roy in the last post. With the current implementation, you can resize to arbitrary dimensions, which opens the app up to Denial of Service (DOS) attacks.

A malicious user could use significant server resources by requesting multiple different sizes for a resized image, e.g.

- 640×480 - /640/480/images/clouds.jpg

- 640×481 - /640/481/images/clouds.jpg

- 640×482 - /640/482/images/clouds.jpg

- 641×480 - /641/480/images/clouds.jpg

- 642×480 - /642/480/images/clouds.jpg

- etc

With the current design, each of those requests would trigger an expensive resize operation, as well as caching the result in the IDistributedCache. Hopefully it's clear that could end up being a problem - your server ends up using a significant amount of CPU resizing the images, and a large amount of memory caching every slight variation.

There are a number of ways you could get round this, all centred around limiting the number of "acceptable" image sizes, for example:

- Only allow n specific, fixed sizes, e.g. 640×480, 960×720 etc.

- Only allow n specific dimensions, e.g. 640, 720 and 960, but allow any combination of these, e.g. 640×640, 640×720 etc.

- Only allow you to specify the dimension in one direction, e.g. height=640 or width=640, and automatically scale the other dimension to keep the correct aspect ratio

Limiting the number of supported sizes like this means you also need to decide what to do if an unsupported size is requested. The easiest solution is to just return a 404 NotFound, but that's not necessarily the most user-friendly.

An alternative approach is to always return the smallest supported size that is larger than the requested size. For example, if we only support 640×480 and 960×720, then:

- if 640×480 is requested, return 640×480

- if 480×320 is requested, return 640×480

- if 720×540 is requested, return 960×720

- if 1280×960 is requested, return ?

We still have a question of what to return for the last point, which requests a size larger than our largest supported size, but you would probably just return the biggest size you can here.
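The snapping rule above, including the fallback to the biggest size, could be sketched like this. SupportedImageSizes is a hypothetical helper, and the two sizes are just the ones from the example, listed smallest first.

```csharp
public static class SupportedImageSizes
{
    // Illustrative sizes from the example above, ordered smallest first.
    private static readonly (int Width, int Height)[] Sizes =
    {
        (640, 480),
        (960, 720),
    };

    // Returns the smallest supported size that covers the request in both
    // dimensions, or the largest supported size if none is big enough.
    public static (int Width, int Height) Snap(int width, int height)
    {
        foreach (var size in Sizes)
        {
            if (size.Width >= width && size.Height >= height)
            {
                return size;
            }
        }

        return Sizes[Sizes.Length - 1];
    }
}
```

With these sizes, a request for 480×320 snaps to 640×480, 720×540 snaps to 960×720, and 1280×960 falls back to 960×720.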

Exactly which approach you choose is obviously up to you. As an example, I've updated the ResizeImage action method to ensure that either width or height is always 0, to preserve image aspect ratio. The SanitizeSize method is shown afterwards.

    [Route("/image/{width}/{height}/{*url}")]
    public async Task<IActionResult> ResizeImage(string url, int width, int height)
    {
        if (width < 0 || height < 0) { return BadRequest(); }
        if (width == 0 && height == 0) { return BadRequest(); }

        if (height == 0)
        {
            width = SanitizeSize(width);
        }
        else
        {
            width = 0;
            height = SanitizeSize(height);
        }

        // remainder of method
    }

For the SanitizeSize method, I've chosen to have 3 fixed sizes, where the smallest size larger than the requested size is used, or the largest size (1280) if you request larger than this.

    private static int[] SupportedSizes = { 480, 960, 1280 };

    private int SanitizeSize(int value)
    {
        if (value >= 1280) { return 1280; }
        return SupportedSizes.First(size => size >= value);
    }

With this in place, you can only request 6 different sizes for each image - 480, 960, 1280 width or 480, 960, 1280 height. The other dimension will have whatever value preserves the aspect ratio.
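To make the arithmetic concrete, here is a small sketch of how the missing dimension follows from the source image's size. AspectRatio.Fill is a hypothetical helper for illustration only. The resize call works this out for you when you pass 0, and the rounding the library uses may differ from the rounding here.

```csharp
using System;

public static class AspectRatio
{
    // Hypothetical helper: fills in whichever of width/height was requested
    // as 0 so that the result keeps the source image's aspect ratio.
    public static (int Width, int Height) Fill(
        int sourceWidth, int sourceHeight, int width, int height)
    {
        if (width == 0)
        {
            width = (int)Math.Round((double)sourceWidth * height / sourceHeight);
        }
        else if (height == 0)
        {
            height = (int)Math.Round((double)sourceHeight * width / sourceWidth);
        }

        return (width, height);
    }
}
```

For a 1920×1080 source image, requesting a width of 960 gives a height of 1080 × 960 / 1920 = 540.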

This provides you with simple protection from DOS attacks. It does, however, raise the question of whether it is worth doing this work in a web request at all. If you only have fixed supported sizes, then resizing the images at compile time and saving as files might make more sense. That way you avoid all the overhead of resizing images at runtime. Anyway, I digress; either way, this covers you against DOS attacks!

Working with other cache implementations

In this example, I simply used one of the most basic caching options available. However, as this code depends on the general IDistributedCache interface, we can easily extend it. If we wanted to, we could replace the MemoryDistributedCache implementation with a RedisCache, which would allow a whole cluster of web servers to only resize an image once, instead of having to resize it once per server.
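As a sketch of what that swap might look like, the registration below uses the AddDistributedRedisCache extension from the Microsoft.Extensions.Caching.Redis package. The connection string and instance name are placeholder assumptions, and newer versions of ASP.NET Core provide AddStackExchangeRedisCache from a different package instead, so check which applies to your version.

```csharp
using Microsoft.Extensions.DependencyInjection;

public class Startup
{
    public void ConfigureServices(IServiceCollection services)
    {
        services.AddMvc();

        // Assumption: a Redis server is reachable at localhost:6379.
        services.AddDistributedRedisCache(options =>
        {
            options.Configuration = "localhost:6379";
            options.InstanceName = "ImageResize:"; // hypothetical key prefix
        });
    }
}
```

The controller itself does not need to change, as it only depends on IDistributedCache.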

Adding caching to your code really can be as simple as this example, especially when you have immutable data as I do. I don't need to worry about images getting stale - I'm assuming you're not going to be adding images to your wwwroot folder when the server is in production - so caching is pretty simple. Obviously, as soon as you have to worry about cache invalidation, things get way more complicated.

An alternative approach

This caching approach works, but there's one thing that slightly bugs me. Even though we're not resizing the image on every request, we're still serving the whole data every time. Notice how in the last screenshot every response is identical - a 200 response and 51.1KB of data?

Response caching is a standard approach to getting around this issue - instead of returning the whole data, the server sends some cache headers to the browser with the original data. On subsequent requests, the server can check the headers sent back by the browser, and if nothing's changed, can send a 304 response telling the browser to just use the data it has cached.
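To make the idea concrete, here is a rough sketch of the two pieces a hand-written version would need: a tag derived from the image bytes, and a comparison against the value the browser sends back in its If-None-Match header. ETagHelper is a hypothetical name of mine, and the comparison is deliberately simplified - it ignores weak validators, comma-separated lists of tags and the `*` wildcard that the header can also contain.

```csharp
using System;
using System.Security.Cryptography;

public static class ETagHelper
{
    // Derives a quoted ETag value from the image bytes, so identical bytes
    // always produce an identical tag.
    public static string Compute(byte[] data)
    {
        using (var sha = SHA256.Create())
        {
            var hash = sha.ComputeHash(data);
            return "\"" + Convert.ToBase64String(hash) + "\"";
        }
    }

    // True when the If-None-Match value sent by the browser matches the
    // current tag, meaning a 304 could be returned instead of the bytes.
    public static bool IsNotModified(string ifNoneMatch, string etag)
    {
        return !string.IsNullOrEmpty(ifNoneMatch)
            && string.Equals(ifNoneMatch.Trim(), etag, StringComparison.Ordinal);
    }
}
```

The action would compute the tag for the cached data, return a 304 status code when IsNotModified is true, and otherwise set the ETag response header before returning the file.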

Now, we could add this functionality to our existing method, but in my next post I'll look at an alternative approach to caching which lets us achieve this without having to write the code ourselves.

Summary

Adding caching to a method is a very simple way to speed up long-running requests. In this case, we used the IDistributedCache to avoid having to resize an image every time it is requested. Instead, the first time a resized image is requested, we store its byte[] data in the cache under a unique key. Subsequent requests for the resized image can just reload the cached data.